7 Use Cases Proving the Business Value of Structured Video Data


- Last Updated: February 27, 2026

Anolytics


Video annotation is the process of labeling real-world events captured in sequence, which involves both spatial complexity (what happens within a single frame) and temporal complexity (how frames change over time). It teaches machines to "watch" and interpret videos in a way that captures the essence of events or actions as they unfold, which is essential for developing smart systems.

Annotation is what turns raw video into valuable training data. The process is complex and requires specialized skills, because it involves frame-by-frame labeling of objects, actions, events, and their relationships. Human expertise delivers quality, while automated video annotation for machine learning delivers quantity; combining the two accelerates the path to reliable model performance.

This article discusses how high-quality video training data is used across diverse industries and why skilled data annotators and automation are both crucial for producing structured datasets. First, let's take a brief look at the video annotation process.

What Does the Video Annotation Process Involve?

Video annotation is a structured, multi-stage process. It typically consists of the following five stages, each tracked by its own quality metric.

Stage 1: Data Preprocessing → Metric: Frame Consistency

Frame-to-frame consistency prevents the flickers, jitters, and visual artifacts that degrade a viewer's experience, a problem that is especially common in AI-generated video. Video generation platforms need reference datasets as a framework to produce structurally consistent output in which motion and appearance remain stable across frames. Done properly, preprocessing removes these inconsistencies and establishes visual uniformity across the dataset.
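As a rough illustration of the frame consistency metric, the Python sketch below scores each pair of consecutive frames by mean absolute pixel difference and flags sudden jumps. It assumes frames are already decoded into small grayscale grids, and the `threshold` value is a hypothetical parameter that would need tuning per dataset; production pipelines typically rely on video libraries and more robust measures.

```python
from typing import List, Sequence

# Assumption: a grayscale frame is a 2-D grid of pixel intensities (0-255).
Frame = Sequence[Sequence[int]]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two equally sized frames."""
    total = 0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count if count else 0.0


def flag_inconsistent_frames(frames: List[Frame], threshold: float) -> List[int]:
    """Return indices of frames whose change from the previous frame exceeds threshold.

    A large jump between consecutive frames is treated as a possible flicker,
    jitter, or artifact for preprocessing to inspect or remove.
    """
    flagged = []
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[i - 1], frames[i]) > threshold:
            flagged.append(i)
    return flagged
```

A flagged frame is only a candidate: a genuine scene cut also produces a large jump, so a reviewer or a scene detector still has to decide whether the frame is an artifact.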

Stage 2: Segmentation → Metric: Temporal Precision

Next comes video segmentation, which divides long video streams into manageable clips. Each clip is a frame sequence bounded by time, events, or scene changes. Segmentation ensures the model receives temporally meaningful data rather than unstructured footage.
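The clip-splitting step can be sketched as follows, assuming scene-cut frame indices are already known (for example, from a scene detector or an annotator) and using a hypothetical `max_clip_seconds` cap. Clips break at every scene cut, and any scene longer than the cap is further chunked by time.

```python
from typing import List, Tuple


def segment_frames(
    num_frames: int,
    fps: float,
    max_clip_seconds: float,
    scene_cuts: List[int],
) -> List[Tuple[int, int]]:
    """Split a stream of num_frames into clips as (start, end) frame ranges, end exclusive."""
    max_len = max(1, int(round(max_clip_seconds * fps)))
    # Keep only cuts strictly inside the stream; 0 and num_frames are implicit boundaries.
    boundaries = sorted({c for c in scene_cuts if 0 < c < num_frames} | {0, num_frames})
    clips: List[Tuple[int, int]] = []
    for seg_start, seg_end in zip(boundaries, boundaries[1:]):
        # Subdivide any scene longer than the time cap into fixed-length chunks.
        start = seg_start
        while start < seg_end:
            end = min(start + max_len, seg_end)
            clips.append((start, end))
            start = end
    return clips


def to_seconds(clip: Tuple[int, int], fps: float) -> Tuple[float, float]:
    """Convert a frame range to start/end timestamps in seconds."""
    return clip[0] / fps, clip[1] / fps
```

For a 100-frame stream at 10 fps with one scene cut at frame 25 and a 3-second cap, this yields `(0, 25)`, `(25, 55)`, `(55, 85)`, and `(85, 100)`.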

Stage 3: Annotation & Temporal Tracking → Metric: Tracking Accuracy

Once segmented, annotation is applied. Annotators label objects, actions, or events across frames, enabling the model to learn both spatial features and temporal behavior. Crucially, annotations are linked across frames so the model can track the same object as it moves, changes shape, or becomes partially occluded.
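To illustrate how annotations are linked across frames, here is a minimal sketch that assigns persistent track IDs by greedily matching each box to the previous frame's boxes by intersection-over-union (IoU). The function names, the `(x1, y1, x2, y2)` box format, and the 0.3 IoU cutoff are assumptions for illustration; real tracking tools add motion models and re-identification.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def link_tracks(frames: List[List[Box]], min_iou: float = 0.3) -> List[Dict[int, Box]]:
    """Return one dict per frame mapping track_id -> box.

    Boxes that match no track from the previous frame start a new track.
    This sketch does not re-identify objects after a full occlusion.
    """
    next_id = 0
    linked: List[Dict[int, Box]] = []
    prev: Dict[int, Box] = {}
    for boxes in frames:
        # Score every (previous track, current box) pair and match best-first.
        pairs = sorted(
            ((iou(pbox, box), tid, j) for tid, pbox in prev.items() for j, box in enumerate(boxes)),
            reverse=True,
        )
        current: Dict[int, Box] = {}
        used_tracks, used_boxes = set(), set()
        for score, tid, j in pairs:
            if score < min_iou:
                break
            if tid in used_tracks or j in used_boxes:
                continue
            current[tid] = boxes[j]
            used_tracks.add(tid)
            used_boxes.add(j)
        # Unmatched boxes become new tracks.
        for j, box in enumerate(boxes):
            if j not in used_boxes:
                current[next_id] = box
                next_id += 1
        linked.append(current)
        prev = current
    return linked
```

Greedy matching keeps the sketch short; an optimal assignment (for example, the Hungarian algorithm) is a common upgrade when many objects overlap.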

Stage 4: QA → Metric: Annotation Accuracy

To ensure reliability, datasets then pass through quality assurance with human-in-the-loop oversight. Automated tools flag inconsistencies, while human reviewers validate edge cases, correct errors, and enforce annotation guidelines.
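The automated half of QA can be as simple as rule-based checks that route suspicious annotations to a human reviewer. The sketch below assumes a hypothetical `Annotation` record and checks three common problems: boxes outside the frame, a track whose class label changes, and gaps where a tracked object disappears and reappears.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Annotation:
    frame: int
    track_id: int
    label: str
    box: Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def flag_for_review(anns: List[Annotation], width: int, height: int) -> List[str]:
    """Run simple automated consistency checks and return human-readable issues."""
    issues: List[str] = []
    by_track: Dict[int, List[Annotation]] = {}
    for a in anns:
        x1, y1, x2, y2 = a.box
        # Box must be non-degenerate and lie inside the frame.
        if not (0 <= x1 < x2 <= width and 0 <= y1 < y2 <= height):
            issues.append(f"frame {a.frame} track {a.track_id}: box out of bounds or degenerate")
        by_track.setdefault(a.track_id, []).append(a)
    for tid, track in sorted(by_track.items()):
        track.sort(key=lambda a: a.frame)
        # The same tracked object should keep one class label throughout.
        labels = {a.label for a in track}
        if len(labels) > 1:
            issues.append(f"track {tid}: label changes across frames {sorted(labels)}")
        # A gap means the object vanished and reappeared; a reviewer confirms occlusion.
        for prev, cur in zip(track, track[1:]):
            if cur.frame - prev.frame > 1:
                issues.append(f"track {tid}: gap between frames {prev.frame} and {cur.frame}")
    return issues
```

None of these flags is automatically an error: a gap may be a legitimate occlusion, which is exactly the kind of judgment left to the human reviewer.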

Stage 5: Dataset Validation → Metric: Label Completeness

Finally, annotated data is validated and exported in model-ready formats, ensuring completeness, consistency, and compatibility with training pipelines.
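One way to express label completeness is the fraction of frames in each clip that carry at least one label, with only passing clips exported. The sketch below makes that assumption, uses a hypothetical record layout with `clip_id` and `frame` keys, and writes JSON Lines as one example of a model-ready format; actual export formats depend on the training pipeline.

```python
import json
from typing import Dict, List


def label_completeness(records: List[dict], clip_lengths: Dict[str, int]) -> Dict[str, float]:
    """Fraction of frames in each clip that carry at least one label record."""
    labeled: Dict[str, set] = {clip_id: set() for clip_id in clip_lengths}
    for r in records:
        clip_id = r["clip_id"]
        if clip_id in labeled and 0 <= r["frame"] < clip_lengths[clip_id]:
            labeled[clip_id].add(r["frame"])
    return {
        clip_id: (len(frames) / clip_lengths[clip_id]) if clip_lengths[clip_id] else 0.0
        for clip_id, frames in labeled.items()
    }


def export_jsonl(records: List[dict], clip_lengths: Dict[str, int], min_completeness: float = 1.0) -> str:
    """Serialize records as JSON Lines, dropping clips below the completeness bar."""
    completeness = label_completeness(records, clip_lengths)
    passing = {c for c, score in completeness.items() if score >= min_completeness}
    return "\n".join(json.dumps(r, sort_keys=True) for r in records if r["clip_id"] in passing)
```

Whether every frame must be labeled depends on the task; for sparse events, a lower `min_completeness` or a per-event definition of completeness may fit better.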

Video labeling involves all of these stages, guided by clear project rules, compliance requirements, and domain expertise. The result is a high-quality, representative dataset that accurately reflects real-world conditions. Achieving this rigor at scale depends on combining automated methods with human oversight, a topic we return to after looking at the industries that rely on annotated video.

Industries Benefiting from Video Annotation

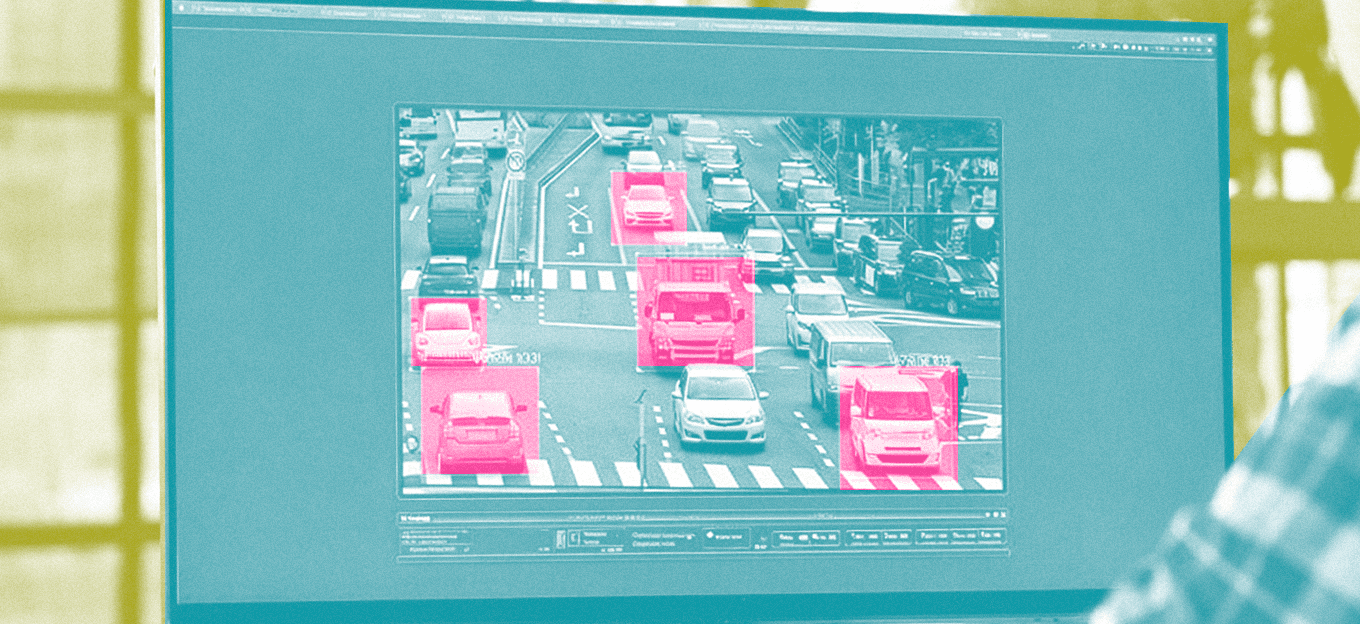

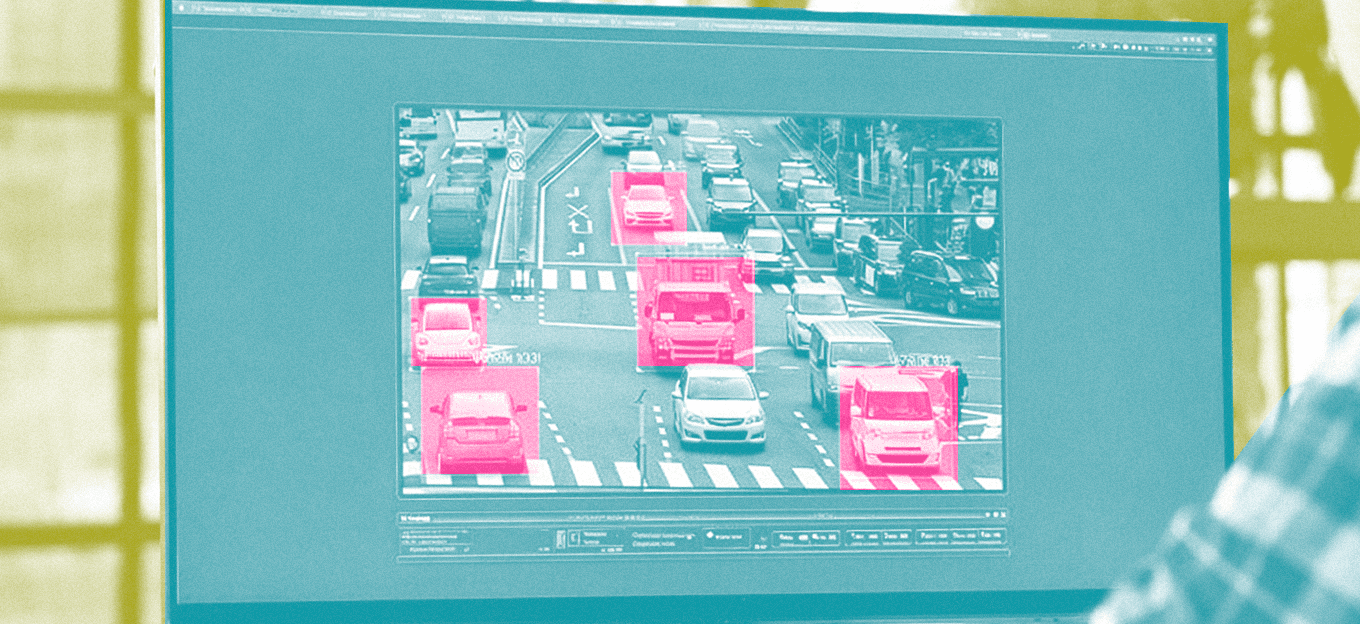

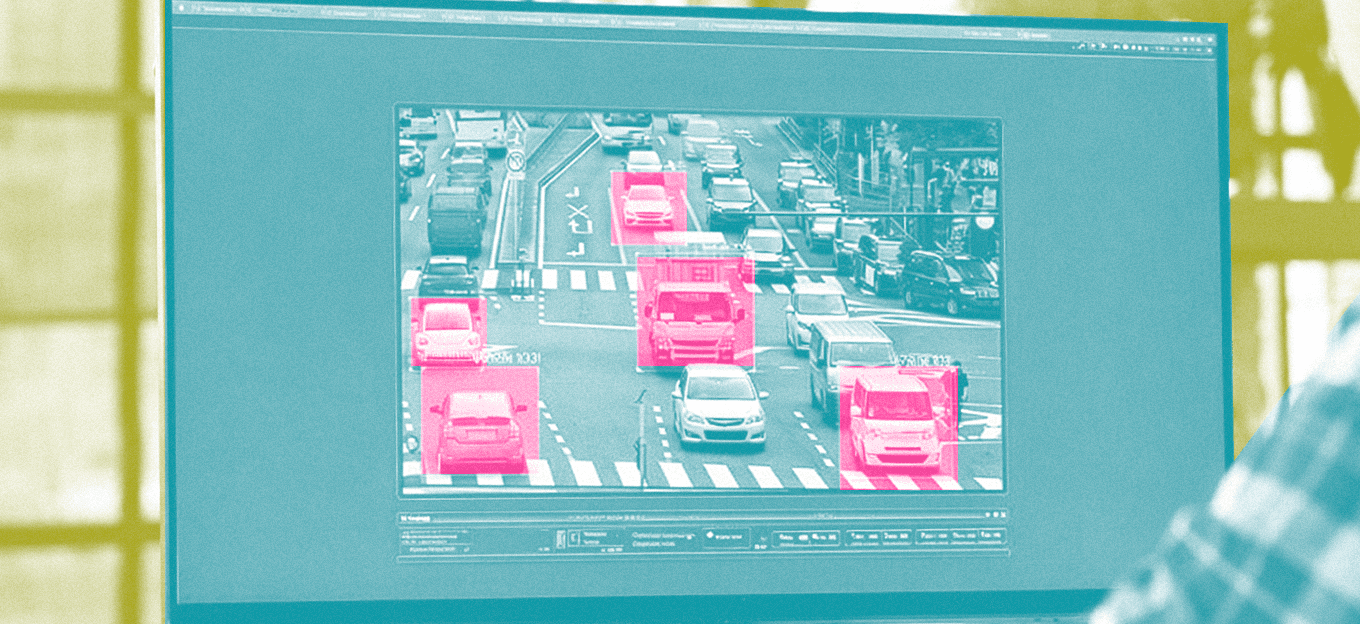

1. Smart surveillance and public safety systems

Smart surveillance and public safety systems depend on properly annotated video data. These systems need to distinguish abnormal activity from normal activity, for example by analyzing how crowds move between residential areas and government locations where security is a priority. Models trained on annotated video identify actions and events more precisely, so they can interpret human behavior in context rather than merely detecting movement. Examples include perimeter security for campuses, factories, and warehouses, and recognizing aggressive actions such as pushing or striking someone to start a fight. AI-powered camera monitoring systems make this kind of behavioral analysis possible.
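As an illustration of how annotated tracks feed perimeter security, the sketch below raises an alert when a tracked person stays inside a restricted polygon for a minimum number of consecutive frames. The zone coordinates, the `min_frames` parameter, and the track format (one center point per frame) are hypothetical; the point-in-polygon test is the standard ray-casting method.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def point_in_polygon(x: float, y: float, polygon: List[Point]) -> bool:
    """Ray casting: count how many polygon edges a ray from (x, y) toward +x crosses."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def intrusion_alerts(
    tracks: Dict[int, List[Tuple[int, float, float]]],
    zone: List[Point],
    min_frames: int,
) -> Dict[int, int]:
    """Map each track ID to the frame at which it first completes
    min_frames consecutive frames inside the restricted zone."""
    alerts: Dict[int, int] = {}
    for tid, points in tracks.items():
        run = 0
        prev_frame = None
        for frame, x, y in sorted(points):
            if point_in_polygon(x, y, zone):
                # Extend the run only if this frame directly follows the previous one.
                consecutive = run > 0 and prev_frame is not None and frame == prev_frame + 1
                run = run + 1 if consecutive else 1
            else:
                run = 0
            prev_frame = frame
            if run >= min_frames and tid not in alerts:
                alerts[tid] = frame
    return alerts
```

Requiring several consecutive frames suppresses one-frame tracking noise; detecting actions such as pushing or striking needs models trained on labeled action clips rather than a geometric rule like this one.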

2. Retail industry

The retail industry uses video annotation to analyze customer behavior and improve store operations. By understanding how customers move through stores, how they behave at checkout, and how they interact with product displays, retailers can optimize store layouts to create better customer experiences. This shopper-centric approach turns technical analytics into actionable strategies. Annotated video data enables machine learning models to measure dwell time, detect foot traffic patterns, and assess engagement levels more accurately.
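Dwell time and foot traffic reduce to simple aggregations once shoppers are tracked and each observation is tagged with a store zone. This sketch assumes a hypothetical input format of one `(frame, zone)` observation per shopper per frame and a known frame rate; if observations are sampled less often, the frame counts would need to be scaled accordingly.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def zone_metrics(
    tracks: Dict[int, List[Tuple[int, str]]], fps: float
) -> Tuple[Dict[str, float], Dict[str, int]]:
    """Compute total dwell time (seconds) and unique-visitor foot traffic per store zone.

    tracks maps a shopper track ID to (frame, zone) observations,
    one per frame in which that shopper was labeled.
    """
    dwell_frames: Dict[str, int] = defaultdict(int)
    visitors: Dict[str, set] = defaultdict(set)
    for tid, observations in tracks.items():
        for _frame, zone in observations:
            dwell_frames[zone] += 1
            visitors[zone].add(tid)
    dwell_seconds = {zone: n / fps for zone, n in dwell_frames.items()}
    traffic = {zone: len(ids) for zone, ids in visitors.items()}
    return dwell_seconds, traffic
```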

3. Sports analytics

Video annotation offers significant advantages in sports analytics. Annotated video makes it possible to track player movements, analyze tactics, and detect key events such as passes, shots, and fouls. Trainers and coaches can use it to improve player performance, while broadcasters can use it for game highlights and analytics. It also supports advanced performance evaluation, automatic highlight production, data-driven coaching decisions, and fan engagement.

4. Healthcare

Healthcare and medical video analysis is a highly specialized domain of video annotation. Accurately time-aligned labels on endoscopic footage and patient-monitoring recordings help healthcare professionals identify surgical procedures and medical conditions. High-quality video annotation in this field supports better clinical decisions and training simulations, and it helps developers build AI systems that assist with medical diagnosis.

5. Robotics and industrial automation

Annotated video data brings major improvements to robotics and industrial automation systems that learn from demonstrations. Robots trained on annotated human behavior from video feeds can learn to perform tasks such as assembly, sorting, and material transportation. The ability to understand task sequences, gestures, and object interactions also contributes to more flexible autonomous robotic systems.

6. Media and entertainment

In media, entertainment, and content management, video annotation enables systems to understand context across an entire video. Annotated datasets support scene detection and content classification, as well as the detection of sensitive content that violates policy rules.

7. Autonomous vehicles and ADAS

Video annotation is advancing autonomous vehicles and advanced driver-assistance systems (ADAS), helping self-driving cars operate independently. Both rely on camera feeds collected from test vehicles. Annotators split this footage into frame sequences and label them using bounding boxes, polylines, and segmentation masks. They also annotate footage captured in non-ideal driving conditions, so models are trained on the same level of complexity they will encounter on the road. Edge cases are deliberately annotated and reviewed, since these scenarios are often where perception systems fail.
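A per-frame label record for driving footage might bundle the three annotation types mentioned above with condition tags, so that edge cases can be pulled out for review. The field names and condition tags below are assumptions, and segmentation masks are simplified to polygons; many tools store pixel masks instead.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


@dataclass
class FrameLabel:
    frame: int
    # (class, box) pairs, e.g. vehicles and pedestrians.
    boxes: List[Tuple[str, Box]] = field(default_factory=list)
    # (class, points) pairs, e.g. lane markings.
    polylines: List[Tuple[str, List[Point]]] = field(default_factory=list)
    # (class, polygon) pairs approximating segmentation masks, e.g. drivable area.
    mask_polygons: List[Tuple[str, List[Point]]] = field(default_factory=list)
    # Hypothetical scene-condition tags such as "night", "rain", or "occlusion".
    conditions: List[str] = field(default_factory=list)


def edge_case_frames(labels: List[FrameLabel], edge_conditions: Set[str]) -> List[int]:
    """Return frames tagged with any non-ideal condition, for targeted human review."""
    return [lbl.frame for lbl in labels if edge_conditions.intersection(lbl.conditions)]
```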

How to Increase Visibility with Structured Data

All of the fields listed above need temporal intelligence, and video annotation provides it through organized training data. Structured data can also improve video visibility and ranking relevance in search results, because machine learning systems can index and interpret it more readily than raw footage. High-quality annotations help models adapt to new situations and handle unusual cases more effectively, because the models learn patterns across sequential data rather than studying each frame in isolation.

Careful annotation addresses the quality of training data, but teams also need to find and organize relevant content among billions of other videos. This question of volume leads many teams to consider outsourcing the work to a professional provider that can combine automated and human methods to handle large volumes of training data.

Automation accelerates dataset turnaround, but it does not remove the need for human oversight. Automated systems can mislabel edge cases such as unique road layouts, occlusions, poor lighting, and unusual traffic patterns (in traffic monitoring systems, for example). Human reviewers check and correct these outputs for accuracy and safety.
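A common way to combine automation with human oversight is confidence-based routing: machine-generated labels above a confidence threshold are accepted, while low-confidence labels and known edge-case conditions go to a human reviewer. The `AutoLabel` record, the 0.9 threshold, and the tag names here are hypothetical placeholders, not a specific tool's API.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass
class AutoLabel:
    frame: int
    label: str
    confidence: float  # model score in [0, 1]
    tags: Tuple[str, ...] = ()  # scene tags such as "occlusion" or "low_light"


def route_labels(
    labels: List[AutoLabel],
    min_confidence: float = 0.9,
    edge_tags: FrozenSet[str] = frozenset({"occlusion", "low_light", "unusual_layout"}),
) -> Tuple[List[AutoLabel], List[AutoLabel]]:
    """Split machine-generated labels into (auto_accepted, needs_human_review)."""
    accepted: List[AutoLabel] = []
    review: List[AutoLabel] = []
    for lbl in labels:
        # Low confidence or a known edge-case condition sends the label to a human.
        if lbl.confidence < min_confidence or edge_tags.intersection(lbl.tags):
            review.append(lbl)
        else:
            accepted.append(lbl)
    return accepted, review
```

Routing edge-case tags to review even when confidence is high reflects the point above: automated systems can be confidently wrong in exactly these conditions.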

Well-executed AI projects reduce uncertainty. As the use of AI for visual data analysis continues to expand, businesses need video annotation as the foundation for reliable, high-performing machine learning systems.

Conclusion

Machine learning algorithms need video data in a structured form to perform reliably in unseen real-world scenarios, not just on benchmark datasets. Because the real world is complex, video annotation for machine learning has become an essential strategic process. The motion, behavior, and time-based information in video make annotation complex, which is why creating the labels often calls for specialists.

Developers seeking to improve model accuracy can outsource data labeling to companies that implement a comprehensive annotation strategy combining task-specific annotation methods, deep domain expertise, and multi-layered quality control.

AI projects built on properly annotated video datasets enable models to identify patterns, predict outcomes, and perform reliably across industrial applications. As demand for video annotation grows, outsourcing these services to a professional partner can strengthen your project's capabilities.
